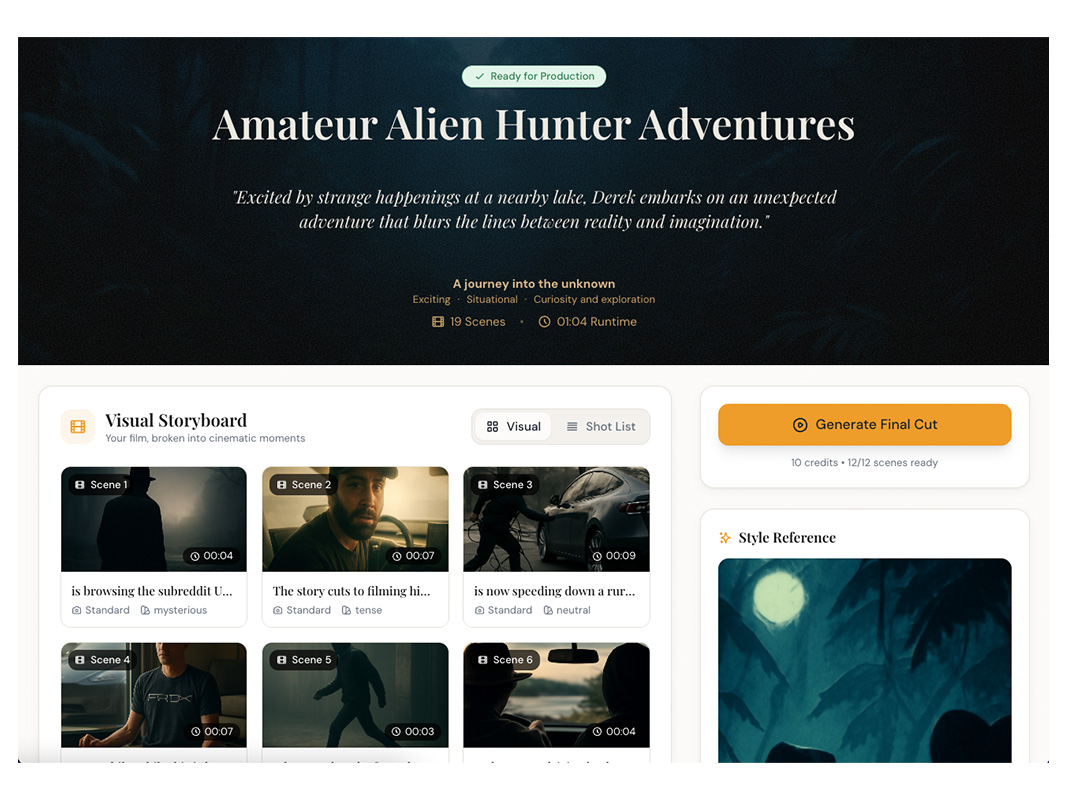

See what deterministic cinematic compilation produces. Every example below was compiled by the engine — 13 intelligence systems working in sequence: StoryCore, BeatMap, DirectorLogic, BioFrame, ProdScale, SRC, PAIL, VAOE, NDPL, MOMA, LO, ID, KI.

Explore how structured story compilation translates narrative intent into production-grade shot specifications, camera grammar, and escalation curves.

Creative Translation

For Human Storytelling

The engine compiles your story into structured shot specifications — deterministic, version-pinned, production-ready.

See how each layer of the Intelligence Engine transforms story into cinematic direction.

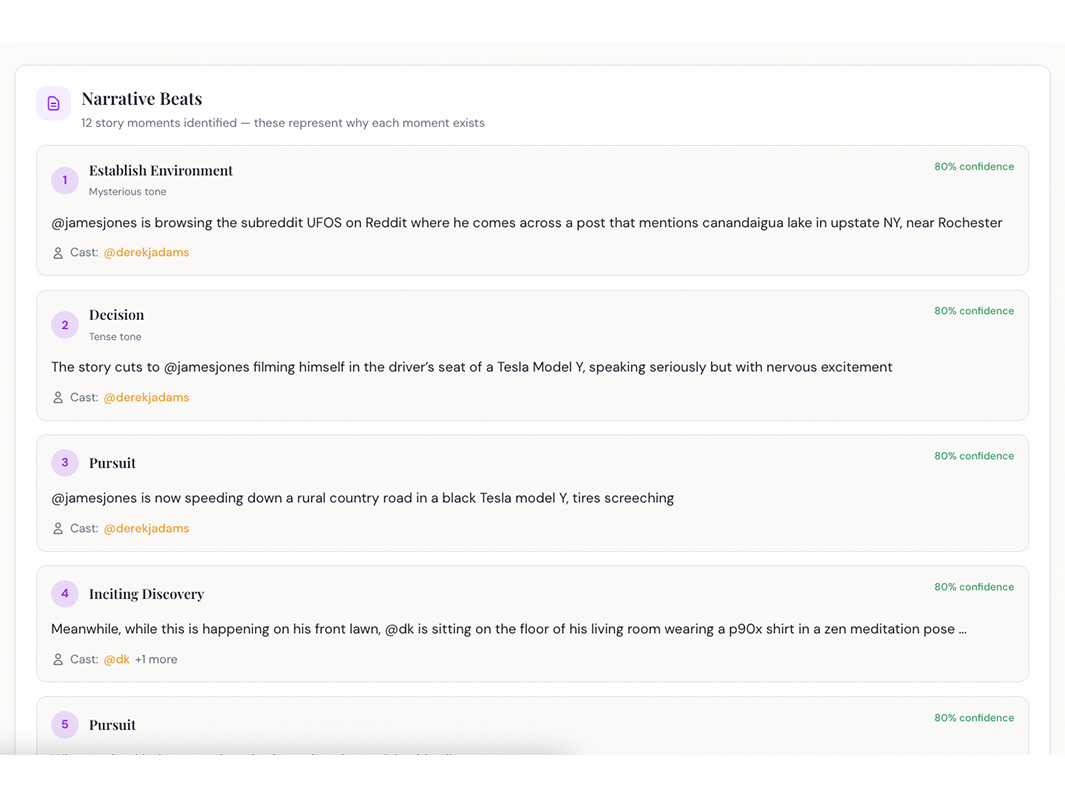

StoryCore™ reads your narrative and determines intent, tone, scale, and constraints. BeatMap™ extracts confidence-scored beats with escalation positions. Together they form the foundation that all downstream modules inherit.

What you're seeing: A narrative transformed into confidence-scored beats with escalation positions and emotional arc mapping.

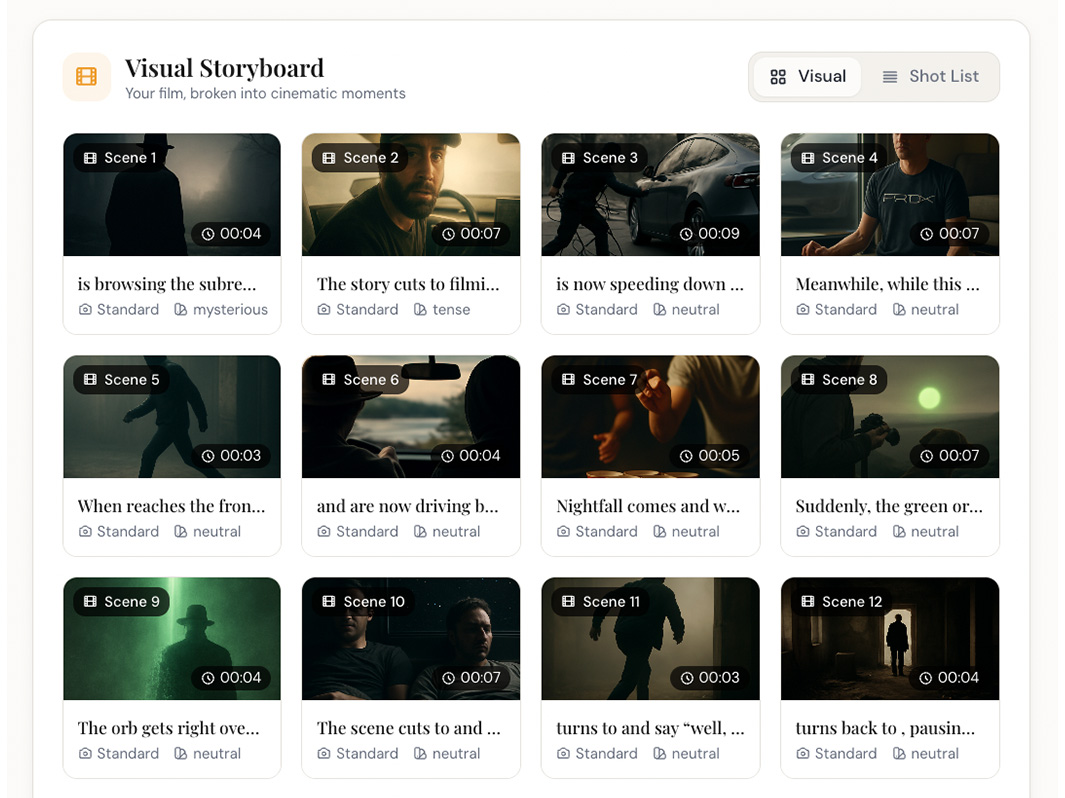

PAIL guarantees cinematic scene entry grammar: ESTABLISH → PRE_ACTION → ACTION. It detects 35+ author pre-action signals or infers environment-first entry from 9 setting templates. Deterministic. No LLM.

What you're seeing: Every scene opens with proper cinematic grammar — environment shell, transition, then action.
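
As a rough illustration of the entry grammar described above, the sketch below always emits ESTABLISH, then PRE_ACTION, then ACTION, using an author-written pre-action sentence when one is detected and an inferred one otherwise. The signal phrases, setting templates, and the names `EntryShot`, `find_pre_action`, and `plan_scene_entry` are hypothetical stand-ins, not PAIL's actual lists or API.

```python
from dataclasses import dataclass

# Hypothetical examples only; the engine's real signal and template lists are not shown here.
PRE_ACTION_SIGNALS = ("pauses", "takes a breath", "reaches for", "waits")
SETTING_TEMPLATES = {
    "forest": "Wide shot of the forest canopy, light filtering through the trees.",
    "city": "Wide shot of the skyline, traffic moving below.",
}
DEFAULT_ESTABLISH = "Wide shot of the location."


@dataclass(frozen=True)
class EntryShot:
    phase: str        # "ESTABLISH", "PRE_ACTION", or "ACTION"
    description: str
    source: str       # "author" if taken from the text, "inferred" otherwise


def find_pre_action(scene_text):
    """Return the first sentence containing a known signal phrase, or None."""
    for sentence in scene_text.split("."):
        candidate = sentence.strip()
        if any(signal in candidate.lower() for signal in PRE_ACTION_SIGNALS):
            return candidate
    return None


def plan_scene_entry(scene_text, setting):
    """Build the three-phase scene entry with plain rules and no model calls."""
    establish = SETTING_TEMPLATES.get(setting, DEFAULT_ESTABLISH)
    authored = find_pre_action(scene_text)
    if authored is not None:
        pre_action = EntryShot("PRE_ACTION", authored, "author")
    else:
        inferred = f"Slow move into the {setting}, holding before the action."
        pre_action = EntryShot("PRE_ACTION", inferred, "inferred")
    return [
        EntryShot("ESTABLISH", establish, "inferred"),
        pre_action,
        EntryShot("ACTION", scene_text.strip(), "author"),
    ]
```

Because the function relies only on fixed lookups and string checks, the same scene text and setting always yield the same three shots.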

DirectorLogic™ is the master orchestrator. It coordinates VAOE™ for blocking, MOMA™ for escalation, LO™ for camera grammar, ID™ for physics, and KI™ for action intensity — all in deterministic sequence.

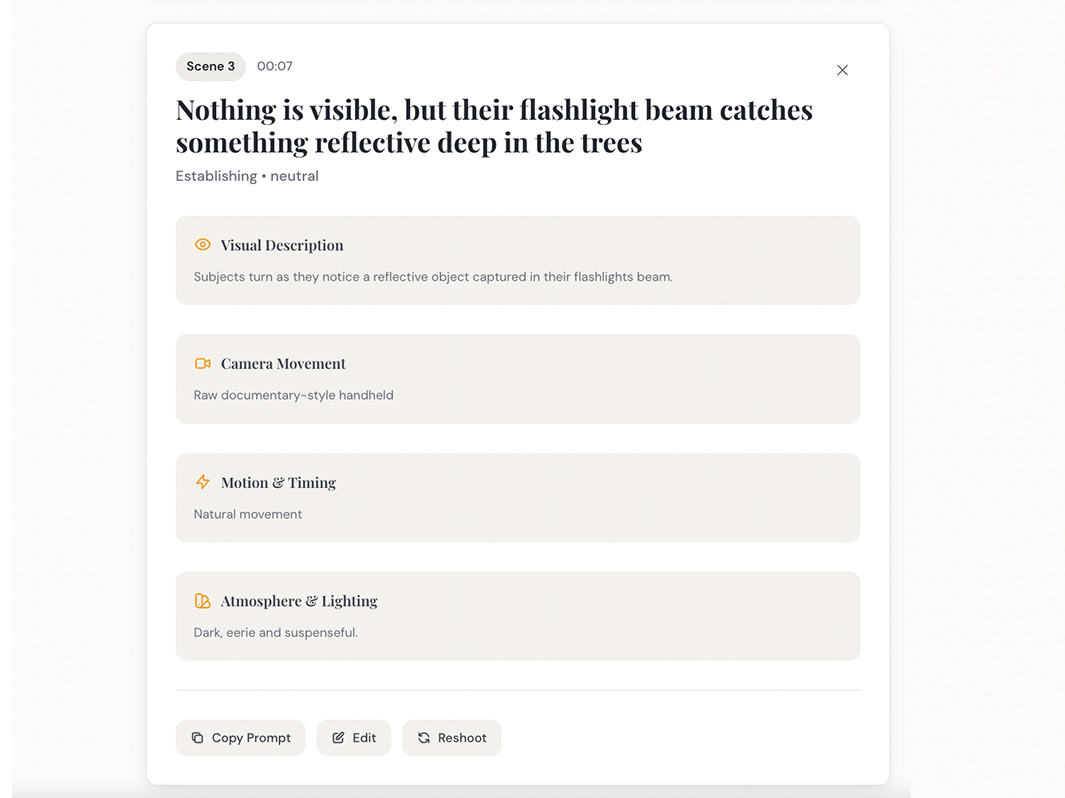

What you're seeing: Complete shot specifications with camera grammar, physics consequences, and escalation positions — compiled from your story.

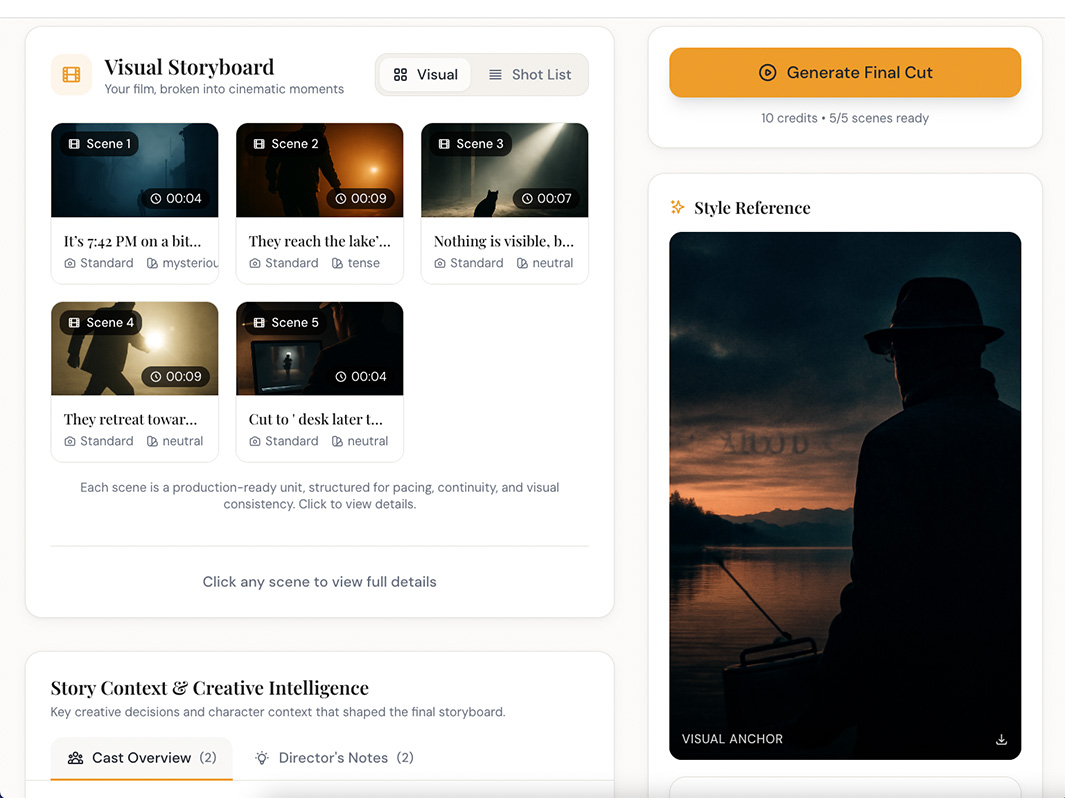

Frame0 ensures your storyboard is a contract. Composition and framing carry from thumbnail to generated video. Framing drift detection with negation-aware keyword matching prevents recomposition.

What you're seeing: Thumbnails that bind to video output — what you approve in storyboard is what you get in generation.
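
To make the idea of negation-aware keyword matching concrete, here is a minimal sketch: a framing keyword counts only when no negator appears in the few words before it, so "not a close-up" does not register as a request for a close-up. The keyword list, negator set, window size, and function names are assumptions for illustration, not Frame0's actual rules.

```python
import re

# Hypothetical vocabulary; the real keyword and negator lists are not published here.
FRAMING_KEYWORDS = ("close-up", "medium shot", "wide shot")
NEGATORS = {"no", "not", "never", "without", "avoid"}
WINDOW = 3  # assumed: how many words before a keyword are checked for a negator


def asserted_framings(prompt):
    """Return the framing keywords the prompt asks for, skipping negated mentions."""
    text = prompt.lower()
    found = set()
    for keyword in FRAMING_KEYWORDS:
        for match in re.finditer(re.escape(keyword), text):
            preceding = re.findall(r"[a-z']+", text[:match.start()])[-WINDOW:]
            if not NEGATORS.intersection(preceding):
                found.add(keyword)
    return found


def has_framing_drift(approved, prompt):
    """True when the prompt asserts any framing other than the approved one."""
    return bool(asserted_framings(prompt) - {approved})
```

With a storyboard approved as a wide shot, `has_framing_drift("wide shot", "Wide shot of the valley, not a close-up")` reports no drift, while a prompt that simply says "Close-up on her face" does.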

Each shot carries its physics consequences (trajectory deviation, dust displacement, debris arcs), a focal length, and a stability setting, while camera grammar (static, push-in, track, handheld) is distributed across the whole story. Same input, same output.

What you're seeing: Production-grade output with physical realism and camera variety enforced across the entire story.
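
One way to picture story-wide camera variety with "same input, same output" behavior is the sketch below. It assigns one of the four camera moves to each shot with no randomness: it prefers calmer moves for low-intensity shots, then picks whichever preferred move has been used least so far. The intensity threshold, the preference rule, and the function names are assumptions made for illustration, not the engine's actual logic.

```python
from collections import Counter

CAMERA_MOVES = ("static", "push-in", "track", "handheld")


def preferred_moves(intensity):
    """Assumed rule: calm shots favor static/push-in, intense shots track/handheld."""
    return CAMERA_MOVES[:2] if intensity < 0.5 else CAMERA_MOVES[2:]


def assign_camera_moves(intensities):
    """Assign one camera move per shot, balancing usage across the story."""
    used = Counter()
    plan = []
    for intensity in intensities:
        candidates = preferred_moves(intensity)
        # min() returns the first least-used candidate, so ties resolve by fixed order.
        move = min(candidates, key=lambda m: used[m])
        used[move] += 1
        plan.append(move)
    return plan
```

Since nothing in the function depends on random numbers or on hash ordering, calling it twice with the same list of intensities returns the same plan.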

In the future, Discover will evolve into a living library of opt-in stories, publicly shareable cast members, and reusable creative elements—allowing creators to learn from and build upon great work.

For now, this gallery represents what's possible.

Ready to direct your own story?